magic is real and its in a C compiler

2026-02-02

A while back I was obsessed with low level programming, I wrote my own little kernel that went nowhere and I learnt all kinds of assembly. During that phase, I learned that unless you are an ffmpeg dev there is no need for you to be hand rolling assembly. Modern compiler do some insane optimizations that seem incomprehensible, is it some kind of math trick or something specific to the hardware architecture? you never even know, but usually its a math trick.

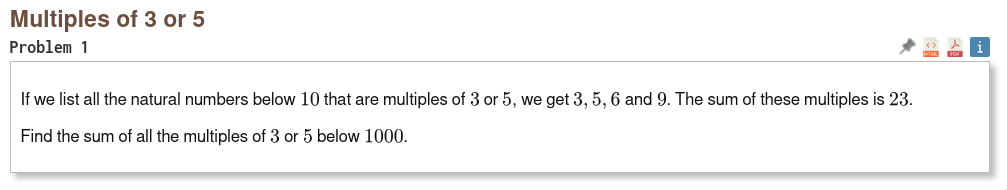

I really wanted to explore optimizations, and the end result was the I was somewhat able to write compiler grade assembly for a project euler problem, specifically the first one. The problem statement is quite simple.

even a novice should be able to solve this with much ease, all you do is check if it is a multiple of 3 or 5 and we add.

N = 1000

sum = 0

for i in range(N):

if i%3 == 0 or i%5 == 0:

sum+=i

print(sum)

In human-grade assembly, the solution would look something like this:

.file "euler1.s"

.text

.globl main

.type main, @function

main:

pushq %rbp

movq %rsp, %rbp

xorl %ecx, %ecx # i = 0

xorl %ebx, %ebx # sum = 0

.loop:

cmpl $1000, %ecx

jge .done

# check i % 3 == 0

movl %ecx, %eax

cdq

movl $3, %esi

idivl %esi

testl %edx, %edx

je .add

# check i % 5 == 0

movl %ecx, %eax

cdq

movl $5, %esi

idivl %esi

testl %edx, %edx

jne .next

.add:

addl %ecx, %ebx

.next:

incl %ecx

jmp .loop

.done:

movl %ebx, %eax # return sum

popq %rbp

ret

And while this may seem completely unreadable, for anyone familiar with the syntax and the CPU architecture, this bit of code is very intuitive.

what the assembly does

main:it is the entry, what did you expect?pushq %rbpandmovq %rsp, %rbpto setup stackxorlto initialize a variable to zero.loop:implements a loop,jgefor the end conditionmovl %ecx, %eax,cdq, andidivlcompute the remainder:

This is where the core of the program lies, you moveiinto%eax, then sign-extend for division, then you load the divisor into%esiand do youridivlwhich stores the remainder in%edx- we do this for both 5 and 3

- then we add and increment loop counter

- once its done, exit.

This bit of assembly is very readable and human-friendly, however if you wrote the equivalent C program and used a modern C compiler, you'd get something incomprehensible.

What is the GCC/Clang magic?

Well the first magic for me was how it avoids using idiv and uses a completely different approach to compute remainder. idiv is usually going to take 20-30 cycles on most modern CPUs, however a multiplication and a bit shift is nearly instant with 3-5 cycles. But how the fuck do we compute remainder with a multiplication and a shift instruction. Well, like this:

imulq $1431655766, %rax

shrq $32, %rax

That is a magic constant, for any constant divisor d, the compiler will compute a magic number, such that upon multiplication and shift by 32, it returns your quotient.

magic = floor(2^32 / d)

q ≈ (n * magic) >> 32

For some of you wizards this maybe basics of assembly optimizations, but for me this opened a whole new world of optimizations. It gets even more weird, it tries to squeeze out every bit of value. That was mostly it for the project euler problem but here are some other interesting optimizations I have come across.

The even divisor magic spell

Assume your C code has something like this:

int q = n / 6;

The compiler will factor the divisor and rewrite it as

q = (n / 3) >> 1;

and then we use the magic constant again to speed up

magic_3 = floor(2^32 / 3) = 1431655766

In the end we get something much difficult to read, but also significantly faster:

movsxd %edi, %rax # rax = n

imulq $1431655766, %rax, %rax # rax = n * magic_3

shrq $32, %rax # rax ≈ n / 3

sarl $1, %eax # rax = (n / 3) >> 1 = n / 6

I find it fascinating that compilers can go this far just for basic optimizations.

Since I was so fascinated by this, I decided to look into the compiler source code to see how they did it. And I want to share you a simple snippet from gcc/expmed.cc

unsigned HOST_WIDE_INT

choose_multiplier (unsigned HOST_WIDE_INT d, int n, int precision,

unsigned HOST_WIDE_INT *multiplier_ptr,

int *post_shift_ptr)

{

int lgup, post_shift;

int pow1, pow2;

/* lgup = ceil(log2(d)) */

lgup = ceil_log2 (d);

gcc_assert (lgup <= n);

gcc_assert (lgup <= precision);

pow1 = n + lgup;

pow2 = n + lgup - precision;

/* mlow = 2^(n + lgup) / d */

wide_int val = wi::set_bit_in_zero (pow1,

HOST_BITS_PER_DOUBLE_INT);

wide_int mlow = wi::udiv_trunc (val, d);

/* mhigh = (2^(n + lgup) + 2^(n + lgup - precision)) / d */

val |= wi::set_bit_in_zero (pow2,

HOST_BITS_PER_DOUBLE_INT);

wide_int mhigh = wi::udiv_trunc (val, d);

/* Reduce to lowest terms */

for (post_shift = lgup; post_shift > 0; post_shift--)

{

unsigned HOST_WIDE_INT ml_lo

= wi::extract_uhwi (mlow, 1,

HOST_BITS_PER_WIDE_INT);

unsigned HOST_WIDE_INT mh_lo

= wi::extract_uhwi (mhigh, 1,

HOST_BITS_PER_WIDE_INT);

if (ml_lo >= mh_lo)

break;

mlow = wi::uhwi (ml_lo,

HOST_BITS_PER_DOUBLE_INT);

mhigh = wi::uhwi (mh_lo,

HOST_BITS_PER_DOUBLE_INT);

}

*post_shift_ptr = post_shift;

if (n < HOST_BITS_PER_WIDE_INT)

{

unsigned HOST_WIDE_INT mask

= (HOST_WIDE_INT_1U << n) - 1;

*multiplier_ptr = mhigh.to_uhwi () & mask;

return mhigh.to_uhwi () > mask;

}

else

{

*multiplier_ptr = mhigh.to_uhwi ();

return wi::extract_uhwi (mhigh,

HOST_BITS_PER_WIDE_INT,

1);

}

}

What this magic spell is doing:

- computing a fixed point approximation to

1/d

mlow = 2^(n + lgup) / d

mhigh = (2^(n + lgup) + 2^(n+lgup-precision)) / d

- this is just shrinking the candidate range

mlow <= exact(2^(n+lgup) / d) < mhigh

- then it finds a multipler

mand a right shift such that

x/d == (x * m) >> post_shift

This thing alone was pretty difficult for me to read through, I still dont understand how rest of the magic has been implemented, maybe the GNU coding standards are just ass making everything unreadable or maybe the compiler just implements advanced algorithms that I am unable to comprehend. Regardless, a modern C compiler is truly a marvel of human engineering.